(~2000 words)

If you generated a random directed graph, you could tally facts about the vertices and edges, but it might be more interesting to determine whether the graph has a cycle. We find ourselves in a vast World filled with many objects coming into being (including ourselves), each one potentially participating in the creation of many others. If we built a directed graph pointing from catalysts to products, we could tally facts about the individual catalysts and products and reactions, but it might be more interesting to find the catalytic cycles within this graph.

Hypercycles and replicators

This has been done before. “Hypercycle” is a term coined in the abiogenesis literature in the 1970s to refer to a complete loop of molecules wherein each molecule is a catalyst for the next in the loop. The hypercycle is one of many examples of positive feedback in chemistry, but it is unique in that the variables subject to feedback are purely the abundances of each chemical species in a set, and no others like temperature or pressure. Because the concept depends only on numeric counts, it is totally generalizable beyond chemistry. Thus we could say that a hypercycle is any complete loop of entities, from the atomic to the astronomical, wherein each is a catalyst for the next in the loop. Two things are important to note. First, hypercycles are likely to exist wherever there is a robust ecology of catalysts, just as cycles are likely to exist in any sufficiently dense directed graph. Second, a hypercycle running through a set of entities is an entity in its own right, distinct from those entities, just as a cycle in a directed graph is distinct from the vertices and edges themselves.
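The graph analogy can be made concrete. Below is a minimal Python sketch, not taken from the hypercycle literature, that lists every simple cycle in a small catalysis graph. The function name `find_hypercycles`, the dictionary layout (catalyst mapped to the things it catalyzes), and the example entities are all assumptions made for illustration. The brute-force search is only practical for small graphs.

```python
from typing import Dict, List, Set, Tuple


def find_hypercycles(catalyzes: Dict[str, List[str]]) -> Set[Tuple[str, ...]]:
    """Return every simple cycle, written starting from its smallest vertex."""
    cycles: Set[Tuple[str, ...]] = set()

    def walk(start: str, node: str, path: List[str]) -> None:
        for nxt in catalyzes.get(node, []):
            if nxt == start:
                # The path has found its way back to where it began.
                cycles.add(tuple(path))
            elif nxt > start and nxt not in path:
                # Only pass through vertices larger than the start, so each
                # cycle is reported once, from its smallest member.
                walk(start, nxt, path + [nxt])

    for start in catalyzes:
        walk(start, start, [start])
    return cycles


# Hypothetical catalysis graph: A, B, C form a loop, D is a dead end,
# and R catalyzes more of itself.
example = {"A": ["B"], "B": ["C"], "C": ["A", "D"], "D": [], "R": ["R"]}
print(find_hypercycles(example))
```

Note that the result is a set of cycles, not a set of vertices: `D` is in the graph but in no cycle, and the loop through `A`, `B`, and `C` is a separate object from any one of them.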

A replicator is a special case of a hypercycle. Or rather, I am going to define it as a special case of a hypercycle, in a way that concords with most usages and connotations of the term “replicator,” even though many users are unaware that it could be a special case of something else. A replicator is a hypercycle whose directed graph is the simplest possible cyclic graph: one vertex with one edge starting and ending on the vertex. You could even say that replicators are the degenerate case and “qualitatively different from” the broader class of hypercycles. At least with non-degenerate hypercycles we can imagine complicated tangles of catalytic loops, wherein each selfsame catalyst might participate in more than one loop, but with replicators there is no participatory multiplicity, there is only pure selfishness and self-participation. If life originated as one or more large hypercycles that iteratively tightened into replicators by chopping or merging links in the cycle, then searching for the origin of life in the simplest possible replicator (e.g. by finding a minimal genome) may be totally misguided because the first life may not even have been a replicator.

Catalysis and watches

What is catalysis? A catalyst is something that greatly speeds up the creation of something else in a milieu furnishing the relevant resources, and is not itself consumed in the process (at least not stoichiometrically: it may wear out eventually). In our Universe, quantum fluctuations can produce anything: disembodied [Boltzmann] brains, Boeing 747s, watches. Anything large is so unlikely, however, that producing it would take vastly longer than our Universe’s roughly 13.8 billion years. But since it is possible with a probability strictly greater than 0, we can say things like “you can have a watch without a watchmaker, but a watchmaker is a catalyst that enormously speeds up the reaction.” Thus we can see a Universe where anything is possible, but where the entities that actually exist continuously reshape what is probable via their catalytic (and anti-catalytic) properties. We can see hypercycles as clever circularities that hack the probability-generating process of the Universe: every individual thing is so unlikely that anything unhacked has virtually no chance.

Some notation

It’s worth creating some notation for hypercycles. Let’s denote a replicator as $A \to A$, “the entity A directly participates in the catalysis of [other entities of the same type as] itself.” A slightly more complicated hypercycle could be $A \to B \to C \to A$, “A catalyzes B which catalyzes C which catalyzes A again” (equivalent to $B \to C \to A \to B$ and $C \to A \to B \to C$).

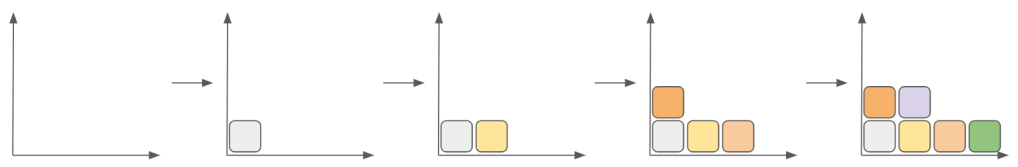
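Because the same loop can be written starting from any of its members, it can help to pick one canonical spelling before comparing two hypercycles. The sketch below is an illustration only: the helper names `canonical` and `same_hypercycle` are hypothetical, a cycle is written as a list of labels without repeating the first entity at the end, and labels are assumed to be distinct within a cycle.

```python
from typing import Sequence, Tuple


def canonical(cycle: Sequence[str]) -> Tuple[str, ...]:
    """Rotate a cycle so that it starts at its smallest label.

    Assumes every label appears at most once in the cycle.
    """
    if not cycle:
        return ()
    start = min(range(len(cycle)), key=lambda k: cycle[k])
    return tuple(cycle[start:]) + tuple(cycle[:start])


def same_hypercycle(a: Sequence[str], b: Sequence[str]) -> bool:
    """Two spellings name the same loop if they agree up to rotation."""
    return canonical(a) == canonical(b)


print(same_hypercycle(["A", "B", "C"], ["B", "C", "A"]))
print(same_hypercycle(["A", "B", "C"], ["A", "C", "B"]))
```

Rotation preserves the direction of catalysis, so the loop through A, B, C and the loop through A, C, B count as different hypercycles here.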

A simple circle of catalysts is the most straightforward topology of a hypercycle. There are two complicating considerations I’d like to add, which are novel as far as I know although I have not searched the literature exhaustively. The first consideration is that a molecule (or entity in general) may have multiple forms, such that […] catalyzes […], which cannot catalyze […] without first changing to conformation […], which can. In that case, […] violates the definition of a catalyst because it does get consumed [stoichiometrically 1:1] in the creation of […]. We can expand the notation to write that as: […]. The second consideration is that it is possible for a hypercycle to have a bifurcation. Molecule […] may catalyze both […] and […], each of which continues to catalyze its own sequence of molecules, with the two sequences getting to, say, molecules […] and […] (or […] and […] if there’s fewer in one of the two sequences), both of which must be together to catalyze […], which finally leads back to […], closing the bifurcation of the hypercycle. We can expand the notation one more time to write that as: […].

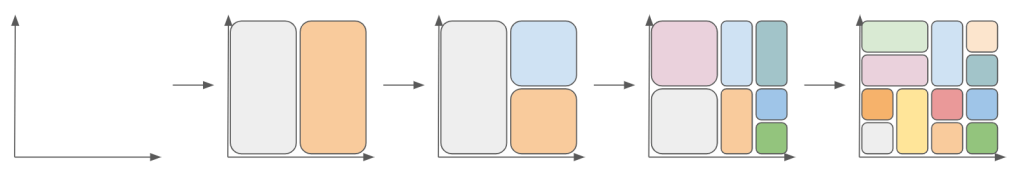
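A bifurcation that closes only when two entities are present together no longer fits a plain directed graph, because one product needs a set of catalysts. One way to sketch this in Python, under assumptions of my own, is to store each reaction as a pair (set of required catalysts, product) and to prune entities until everything left is produced by catalysts that are also left. The labels `A`, `B1`, `B2`, `C1`, `C2`, `D` and the function name `closed_core` are placeholders, not the notation used above.

```python
from typing import FrozenSet, List, Set, Tuple

# (catalysts that must all be present together, product)
Reaction = Tuple[FrozenSet[str], str]


def closed_core(entities: Set[str], reactions: List[Reaction]) -> Set[str]:
    """Largest subset in which every member is the product of some reaction
    whose catalysts all lie inside the subset."""
    core = set(entities)
    changed = True
    while changed:
        changed = False
        for entity in list(core):
            supported = any(
                product == entity and catalysts <= core
                for catalysts, product in reactions
            )
            if not supported:
                core.remove(entity)
                changed = True
    return core


# Hypothetical bifurcated loop: A starts two branches, and the branch ends
# B2 and C2 are needed together to make D, which leads back to A.
loop = [
    (frozenset({"A"}), "B1"),
    (frozenset({"A"}), "C1"),
    (frozenset({"B1"}), "B2"),
    (frozenset({"C1"}), "C2"),
    (frozenset({"B2", "C2"}), "D"),
    (frozenset({"D"}), "A"),
]
everything = {"A", "B1", "B2", "C1", "C2", "D"}

print(closed_core(everything, loop))
# Dropping the C1 -> C2 step breaks one branch of the bifurcation.
print(closed_core(everything, [r for r in loop if r[1] != "C2"]))
```

With the full reaction list every entity keeps its support. With one branch cut, nothing does, which is the sense in which both branch ends "must be together" for the loop to close.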

Multicellular organisms are rarely replicators

It may be surprising, but multicellular organisms are rarely replicators. That is, they are not $A \to A$. Near exceptions include the New Mexico whiptail lizard, […] (see development and asexual reproduction), among others. In contrast, all mammals are […] (see sex). Ferns are […] (see alternation of generations). Willows are […] (see dioecy). Jellyfish are […] (see jellyfish life cycle). These could be even more complicated if we detailed gametogenesis and monozygotic twinning.

An exhaustive search

We may not have totally exhausted the hypercycles of conventionally defined biological species, but the above is a solid start which covers all of the organisms most people know about. Let’s think beyond conventionally defined biological species. Does any single hypercycle flow through more than one species? Do the members of any species catalyze the members of another species which in turn catalyze them back? Certainly. Mutualism in all its forms is an example of this. You can draw a hypercycle that loops from yucca moths to yuccas and back, for example. In some instances, the symbiotic organisms engulfed each other, such as eukaryotes and their mitochondria, together becoming a new species (see symbiogenesis). A yucca plant and its moth will probably never be considered a single organism by science, but perhaps they should be, like two sexes of the same species.

How about beyond biology? Are there any hypercycles in astronomy? In the cosmological natural selection hypothesis, Universes are […] if each black hole pops out one Universe, but […] if each black hole can pop out many. There can be a hypercycle through supernovas and stars, since supernovas can trigger the collapse of nearby gas clouds into new stars that can themselves go supernova.

Brains, culture, and macro abiogenesis

How about in human culture? A simple tool like a stone to crack a nut has a certain probability of being generated spontaneously in a human milieu; even monkeys have been observed with such a tool. Both the physical hand-stone-nut assemblage and the in-brain concept of the hand-stone-nut assemblage have a certain probability of being generated, but the concept is generated much more quickly when a human observes another human enacting the hand-stone-nut assemblage, and the behavior is generated much more quickly when the concept has been learned. Importantly, many humans can observe another human at the same time, so we have […], and once the concept has been learned, a human can enact it many times, so we also have […] and thus together […], a hypercycle with two entities.

The situation gets more complicated when we consider tools that make other tools. The axe hews all of the canoe, the spear, the fence, and the thatch. One axe can hew many canoes, many spears, many fences, or many thatches, so we have […], […], […], […]. Canoes, spears, fences, and thatches provide for the flourishing of human life, which re-creates the conditions to perpetuate itself, like the axe, so we also have, slightly hand-wavy, […] and the rest, completing the hypercycles. Thus the bulk of cultural artifacts are not replicators: they are not $A \to A$. The directed graph of catalysis in the human economy is vast and incredibly dense. Pencils, CPUs, billboards, beds catalyze myriads of other entities, including themselves, after many indirect deferrals. Drawing the hypercycle of any would take years of research and probably end up including most of the economy. The hypercycles of most of these even include each other, such that the “hypercycle of X” is a misnomer to the extent that it unfairly highlights one specific catalyst in a cycle of many.

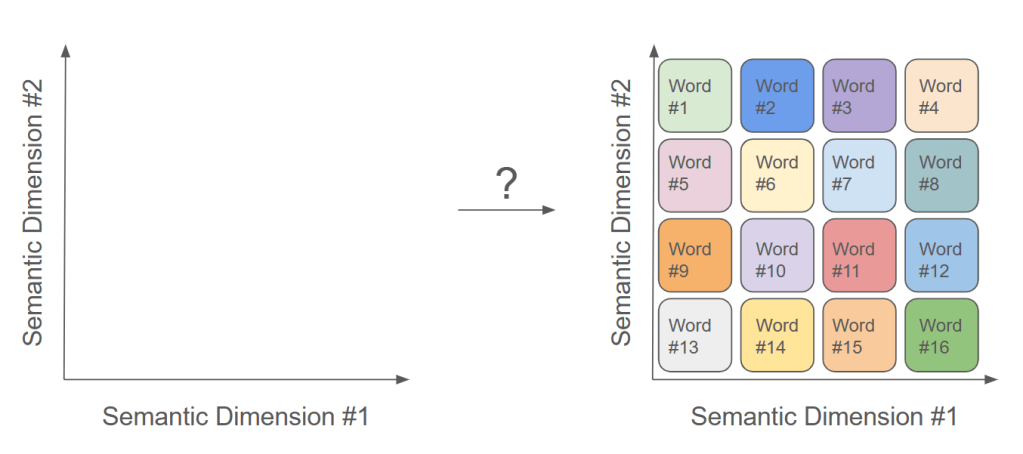

Our economy may be hypercyclically similar to those warm, abiogenetic pools busy with molecules. If every molecule catalyzed lots of others, it was virtually inevitable that one or a few hypercycles would run away with all of the probability-generating capacity, with all of the other edges and vertices in the directed graph withering as one or a few cycles flowed around and around, drawing everything else in. There might end up being hypercycles in the economy, initially somewhat random series of entities making other entities, that similarly find their own tail and run away with the whole surface of the Earth. Some say that this has already happened.
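The runaway claim can be illustrated with a toy model. Everything in it is an assumption of mine and not a result from chemistry or economics: abundances are tracked as shares of a fixed total, each catalyst adds `rate` times its own share to each of its products per generation, and the shares are then renormalized. The graph has one closed loop (A, B, C) and one open chain (D to E).

```python
from typing import Dict, List


def step(shares: Dict[str, float], catalyzes: Dict[str, List[str]],
         rate: float) -> Dict[str, float]:
    """One generation: catalysts boost their products, then shares are
    rescaled so that they sum to 1 (a fixed pool of resources)."""
    grown = dict(shares)
    for catalyst, products in catalyzes.items():
        for product in products:
            grown[product] += rate * shares[catalyst]
    total = sum(grown.values())
    return {name: value / total for name, value in grown.items()}


def simulate(catalyzes: Dict[str, List[str]], rate: float = 0.5,
             generations: int = 60) -> Dict[str, float]:
    """Start every entity at an equal share and iterate `step`."""
    names = set(catalyzes) | {p for ps in catalyzes.values() for p in ps}
    shares = {name: 1.0 / len(names) for name in sorted(names)}
    for _ in range(generations):
        shares = step(shares, catalyzes, rate)
    return shares


# Hypothetical pool: a three-member loop plus a chain that never closes.
pool = {"A": ["B"], "B": ["C"], "C": ["A"], "D": ["E"]}
final = simulate(pool)
print({name: round(share, 6) for name, share in final.items()})
```

In this model the loop compounds every generation while the open chain grows only linearly, so after renormalization the loop's members end up holding almost the entire pool. Real catalytic networks have saturation, decay, and competition for specific resources, none of which is modeled here.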

Epilogue: Consciousness vs. Pure Replicators

Almost all agents exist in an advanced milieu that made it probable for them to come into being. The premise of the article Consciousness vs Pure Replicators is that there are two broad categories of motivation that drive agents: 1) the intrinsic value of conscious experiences which are knowable by agents (and formalizable mathematically) and 2) the forces of “replication” that use conscious matter merely in the service of “replication.” It should be clear why I placed the word in scare-quotes: replicators are actually quite rare, because most “replication” is the iteration of a hypercycle that is indeed larger than $A \to A$. I’m not saying that the article makes that mistake, only that this is the ordinary but careless connotation of “replicator,” which I tried to capture above when I defined it as the degenerate case of hypercycles.

The distinction matters because an agent that is concerned with replicators is likely to overfocus on circumscribable objects in the World and categorize them as “replicators” or “not-replicators,” which is much like trying to find cycles in a directed graph by looking at one vertex or edge at a time, rather than to study the landscape of catalysis and production in the World broadly, especially as they touch the agent itself. The important insight that might be missed is that anything can be in a hypercycle, even if it wasn’t meant to be, and crucially that hypercycles flow through you, with many of them not so simple as circling from brain to physical manifestation and immediately back to brain (i.e. “memes” as popularly conceived: […]) but rather long arcs that course through substantial portions of the entire World. If consciousness is to win, it must know what it is fighting.